International graduate student conducts research for a multi-modal emotion recognition model

Maria Gomez | June 9, 2020

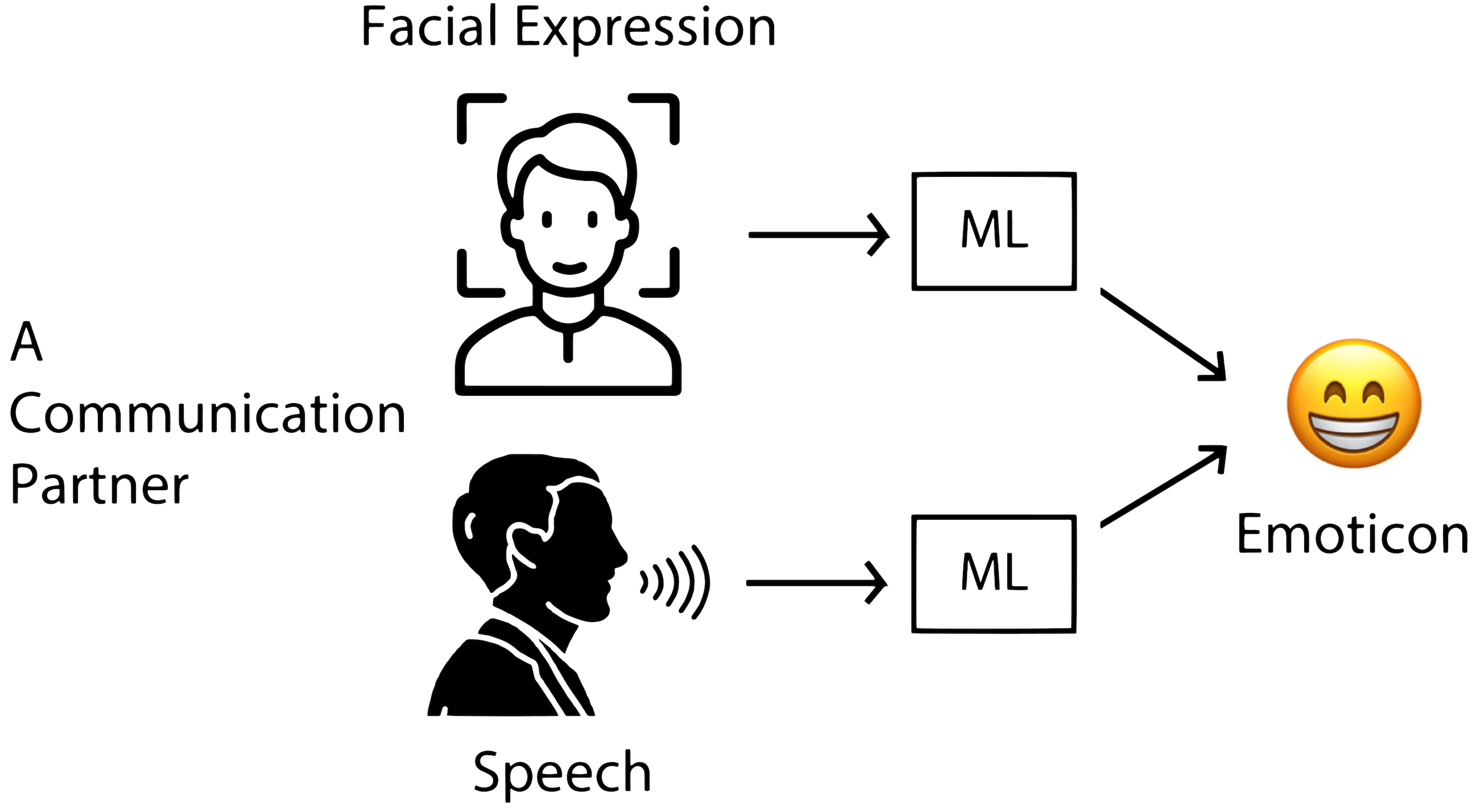

Rezwan Matin, a graduate student pursuing his master’s degree in Engineering, conducted research to create a multi-modal emotion recognition model that will be able to identify human emotions in conversations. The model is intended to help children with Autism Spectrum Disorder (ASD), who struggle to identify human emotions.

Matin is assisting the High-Performance Engineering (HiPE) research group at Texas State with research for this model. Dr. Damian Valles, assistant professor, and Dr. Vishu Viswanathan, both from the Ingram School of Engineering, and Dr. Maria Resendiz from the Department of Communication Disorders are the advisors on the project.

According to Matin, Inzamam Ul Haque, a former Engineering student at Texas State, developed the first working model, which reads a person’s facial expression and predicts their emotional state. Matin is currently developing a speech emotion recognition model to complement the facial expression recognition model. Used together, the two models are expected to predict emotions more accurately than either one alone.

“I hope that my research will help children with ASD become more comfortable in communicating with those around them. We as humans thrive as a community, and communication is the integral part of any society. Unlike most people, children with ASD have difficulty identifying human emotions, which creates a communication gap. If they can overcome this hurdle, life will be so much easier for them,” said Matin on why he chose this research for his Master’s thesis.

Matin is an international student from Bangladesh and chose to come to the United States to become a better engineer and serve the community. He chose Texas State after being offered the Graduate Instructional Assistantship (GIA) from the Ingram School of Engineering.

“I am thankful to all my professors who provided me with excellent guidance and support. I have made friends in the Graduate program who keep pushing me to better myself every step of the way,” said Matin.

Matin plans to apply additional machine-learning techniques to improve his model, running the work on the Texas State LEAP cluster, hardware that can run multiple experiments at once. Once that is done, the model will be used in clinical trials under the Department of Communication Disorders.

For more information, contact University Communications: Jayme Blaschke, 512-245-2555; Sandy Pantlik, 512-245-2922